The internet arrived without a rulebook. Some organisations arrived without ethics. They exploited the freedom, ignored the harm, and pretended nothing was wrong. This piece sets out the excuses, and the reality that the mess is theirs to fix.

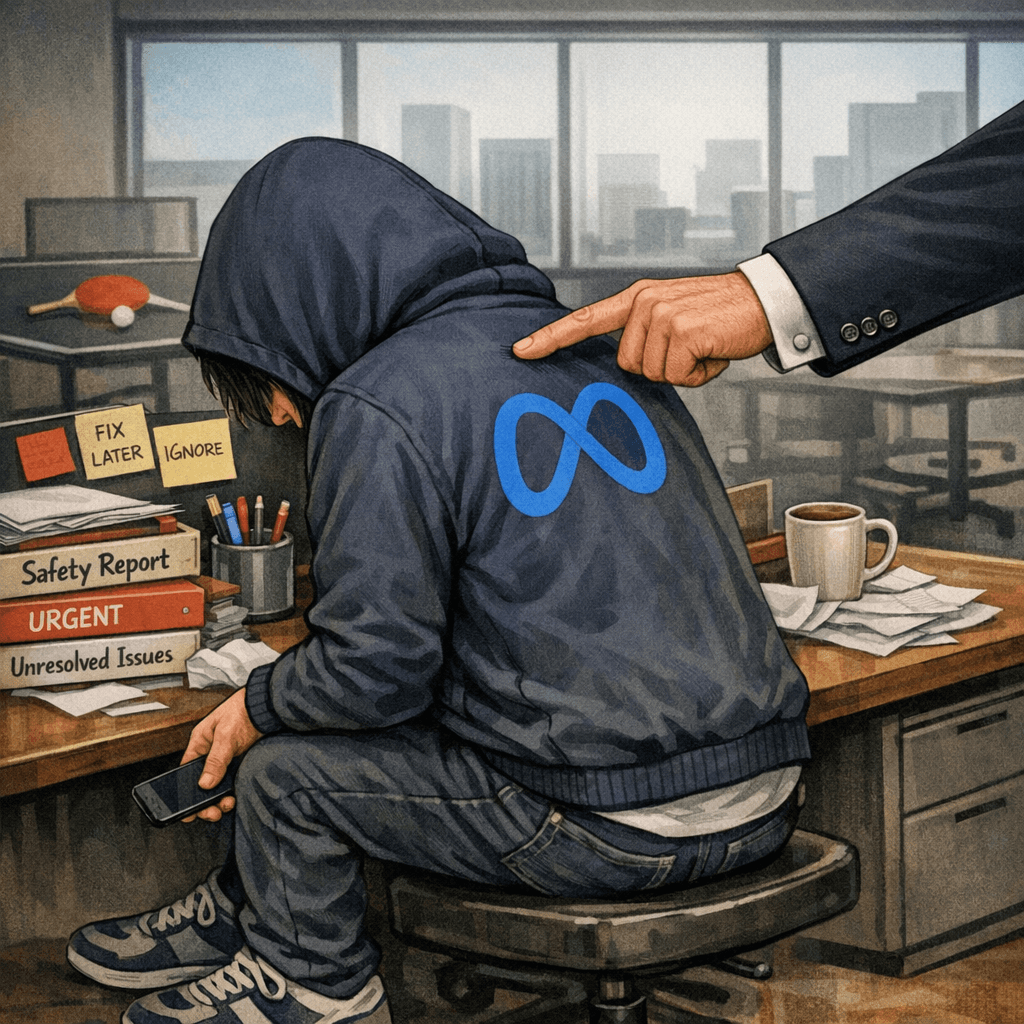

At this point, watching Meta explain why it “can’t” keep teenagers safe online is like watching a child who’s eaten too much candy and is now dramatically clutching their stomach on the floor.

So here’s your executive slap, Meta: pull yourself together, sit up straight, and stop pretending the world’s biggest tech company can’t manage basic safety.

Party’s over. You aren’t above the law.

“Oh no… my system can’t cope… too many users… too much responsibility…”

You’ve got to be joking.

Did they leave school early?

Skip the security and ethics classes?

Too busy playing table tennis in the office to attend “responsibility” week?

Because liquor shops manage it.

Banks manage it.

Pharmacies manage it.

Even the bargain‑bin vape shop with the flickering fluorescent light manages it.

But Meta — one of the biggest tech companies on Earth — pulls on a hoodie to hide behind and whispers,

“Security is just too hard.”

Spare me. Pleeease.

Excuse #1: “We can’t verify age.”

Translation:

We can verify age. We just don’t want to, because children = engagement = money.

Meta can recognise your face from a blurry photo taken on a Nokia in 2009, but apparently can’t tell if someone is 12.

So let’s ask the obvious question:

Do you have your own children on the platform?

And if the answer is yes — what were the setup steps?

Did you:

- verify their identity

- verify their age

- verify their device

- verify their permissions

- verify their adult oversight

…or did you just let them wander in because “it’s too hard” to build the systems properly?

Because if your own kids are on there, and you’re fine with the current setup, then you’re also saying:

“Good enough for everyone else’s kids.”

Excuse #2: “We can’t confirm who’s using the device.”

Translation:

We know exactly who’s using the device — we just don’t want to be responsible for it.

Banks: “Here’s your two‑factor authentication.”

Meta: “What if… we just didn’t?”

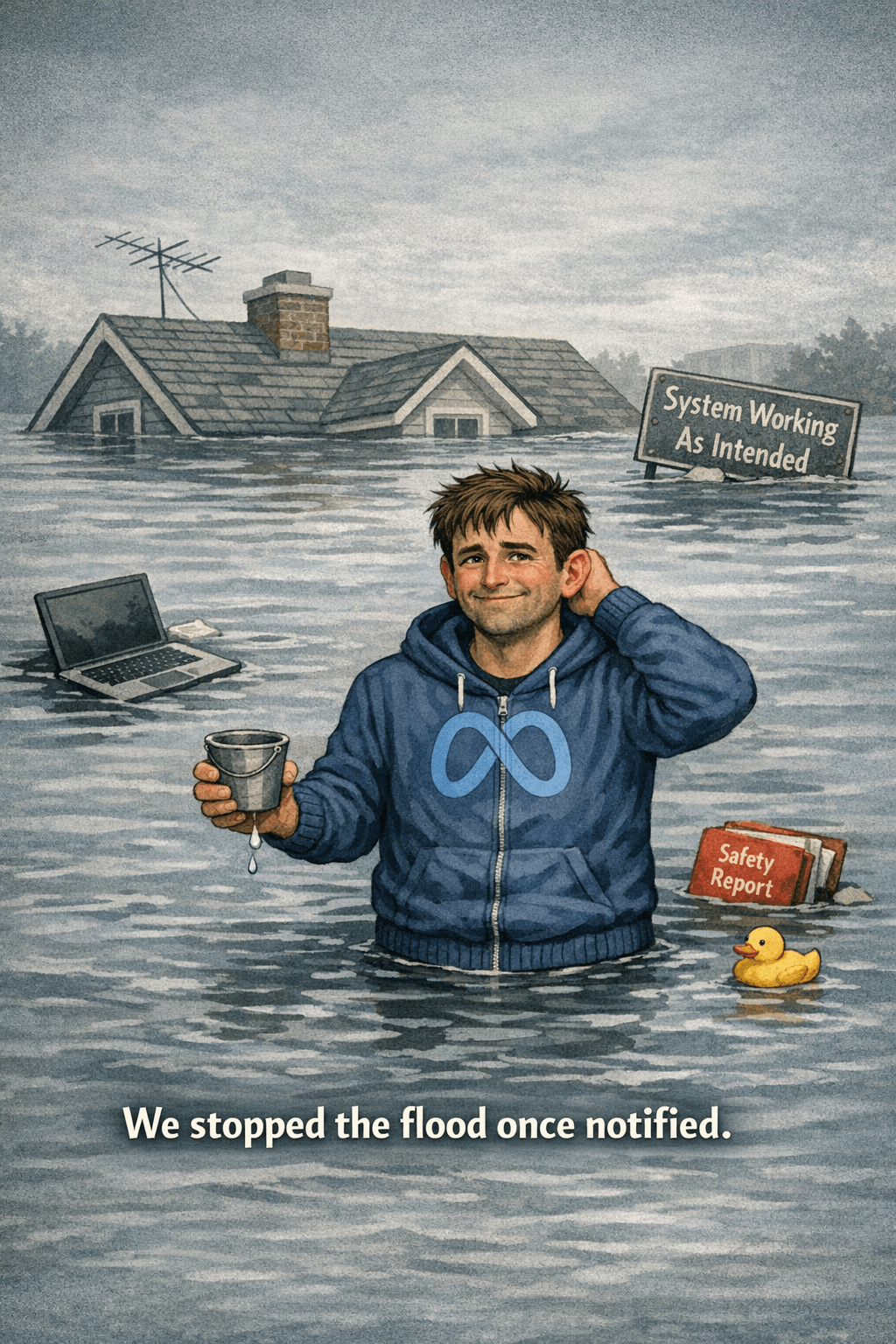

Excuse #3: “We removed the harmful content once notified.”

Translation:

We monetised the chaos first, then cleaned up when the lawyers started circling.

It’s like saying:

“I stopped the flood once the house was underwater.”

Excuse #4: “We can’t catch everything at scale.”

Translation:

We scaled irresponsibly and now want sympathy for the consequences.

Imagine a restaurant saying:

“We served too many customers today, so we can’t guarantee the food isn’t contaminated. Oopsie.”

No.

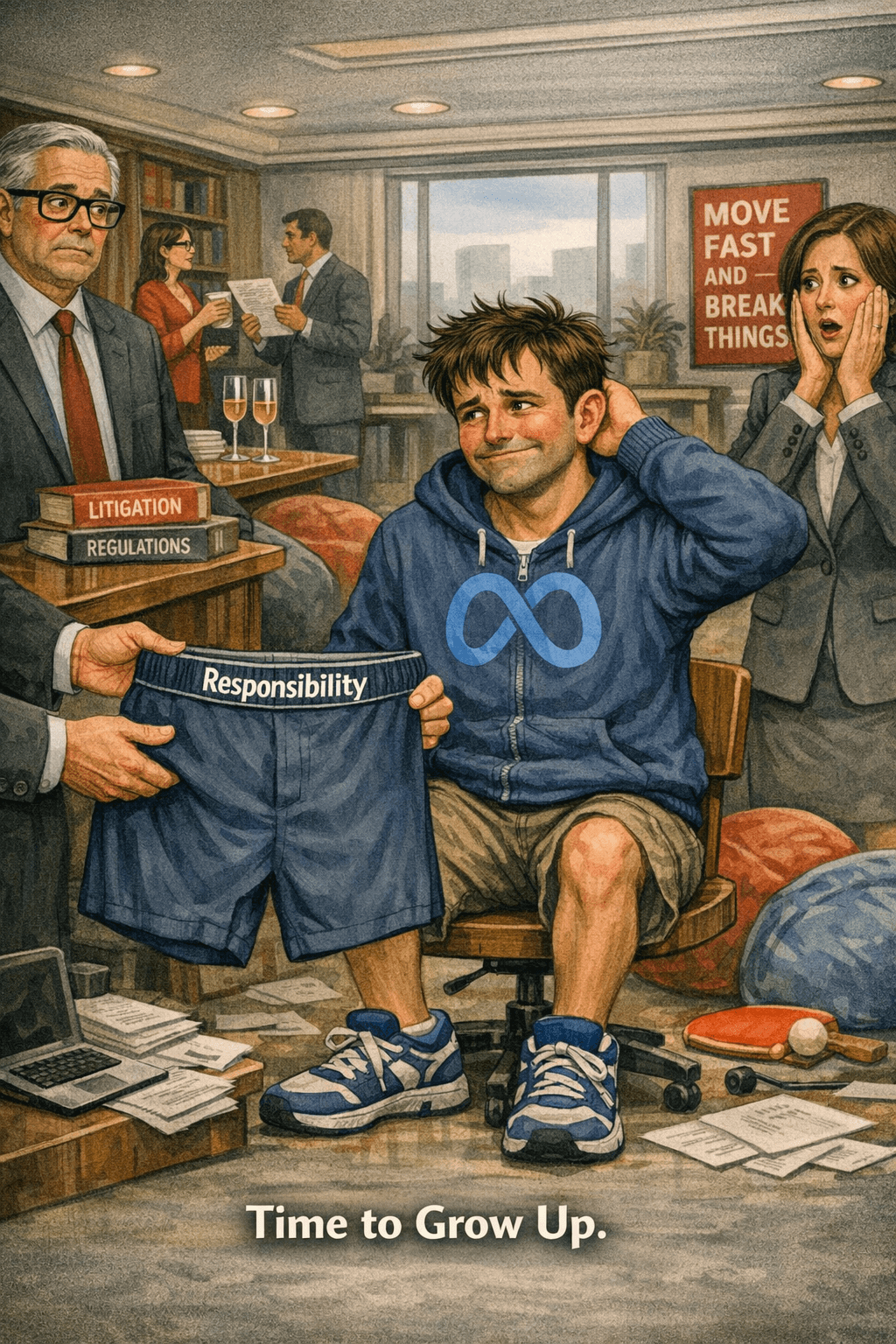

Grow up.

You’re not in the playground anymore.

Excuse #5: “We’re just a neutral platform.”

Translation:

We make money from ads — remember that question you laughed at?

Well guess what: that’s exactly what stops you being neutral.

This isn’t a charity platform.

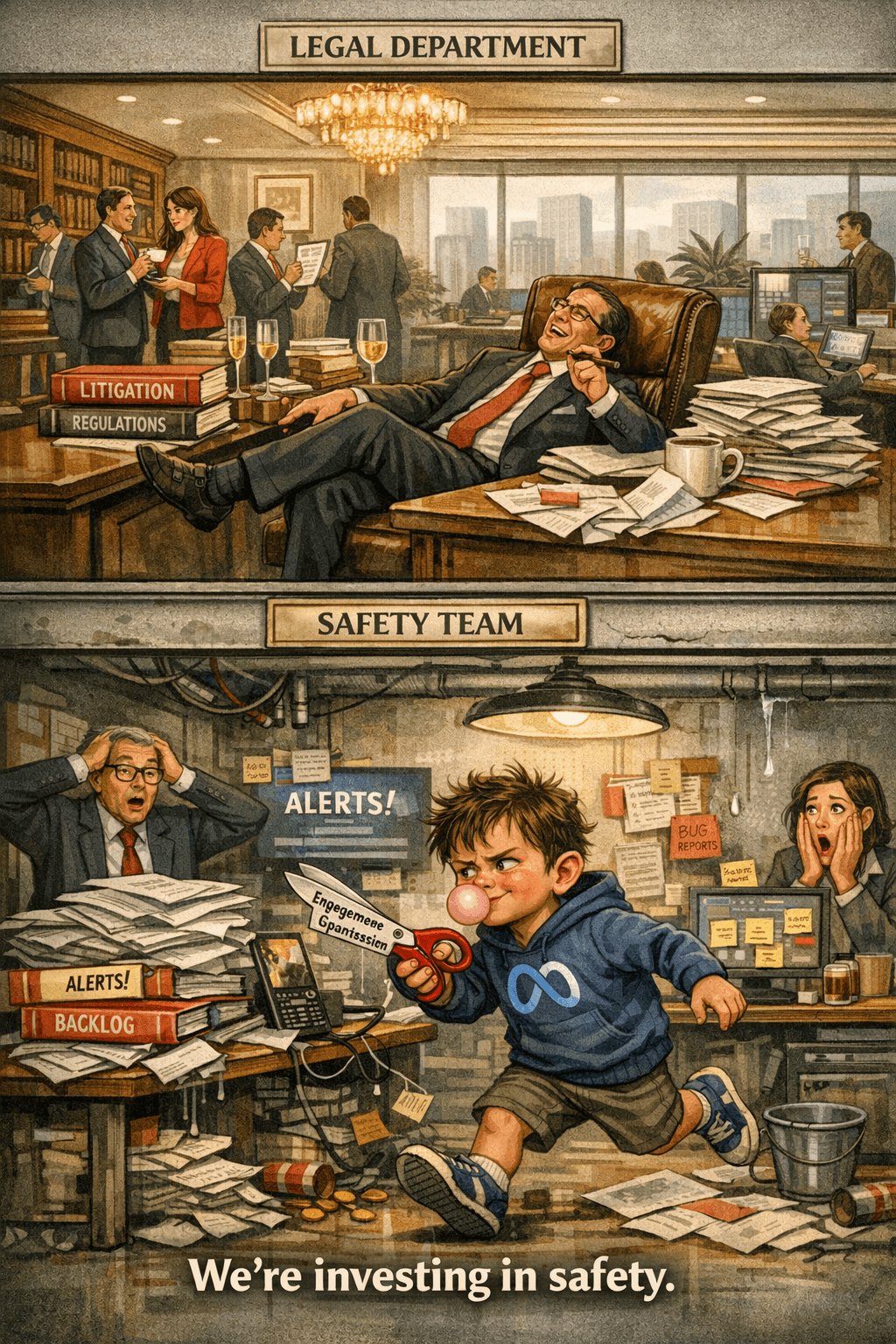

It’s a money‑making machine with an algorithm that behaves like a toddler with scissors.

Neutral platforms don’t auto‑recommend extremist content to minors.

Neutral platforms don’t push violent videos because they spike engagement.

Neutral platforms don’t behave like a slot machine with a PhD in manipulation.

Excuse #6: “We provide parental controls.”

Translation:

We built a maze, hid the entrance, removed the map, and then blamed the parents for getting lost.

Honestly, even a flat‑pack kit‑set from the bargain aisle comes with clearer instructions.

At least IKEA gives you a cartoon man pointing at the screws.

Meta gives you a 47‑page settings menu, three contradictory help articles, and a “good luck!” energy that feels like a prank.

If your safety system requires:

- a magnifying glass

- a YouTube tutorial

- and a séance

…it’s not a safety system.

It’s plausible deniability with a side of chaos.

Excuse #7: “We’re investing in safety.”

Translation:

We can afford a small army of lawyers, but apparently not enough qualified engineers to fix the actual problems.

Funny how the legal department is always fully staffed, but the safety team is “working on it.”

And here’s the real punchline:

Meta doesn’t want to change because the system is profitable exactly as it is.

The harm is lucrative.

The virality is intentional.

The frictionless onboarding is strategic.

The “we can’t verify age” routine is theatre.

So what if we speak their love language: money?

- $100,000 fine for every under‑18 account created without verified adult oversight.

- $5,000 per view of harmful content delivered to a minor.

- Instant, automatic, civil fines — like traffic infringements.

- Strict liability — no excuses, no device arguments, no “we didn’t know.”
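For anyone who wants to see how fast that adds up, here is a rough back-of-envelope sketch. The two fine amounts come straight from the list above; the function name and the counts fed into it are invented purely for illustration and are not real figures about any platform.

```python
# Fine amounts are taken from the proposal above.
# The function name and the example counts are hypothetical.
FINE_PER_UNVERIFIED_ACCOUNT = 100_000  # USD per under-18 account without verified adult oversight
FINE_PER_HARMFUL_VIEW = 5_000          # USD per view of harmful content delivered to a minor


def total_fine(unverified_accounts: int, harmful_views: int) -> int:
    """Return the total strict-liability fine in dollars for the given counts."""
    if unverified_accounts < 0 or harmful_views < 0:
        raise ValueError("counts must be non-negative")
    return (unverified_accounts * FINE_PER_UNVERIFIED_ACCOUNT
            + harmful_views * FINE_PER_HARMFUL_VIEW)


# Made-up example: 10 unverified accounts and 200 harmful views.
print(f"${total_fine(10, 200):,}")  # prints $2,000,000
```

Ten accounts and two hundred views is a rounding error at platform scale, and it already costs two million dollars. That is the point of a per-incident fine: the bill grows with the harm.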

Oh, you expanded too quickly to comply with local laws?

Sounds like a Meta problem.

The movie industry survives age restrictions.

Banks survive identity verification.

Pharmacies survive controlled‑substance rules.

Liquor shops survive ID checks.

But Meta?

Meta wants us to believe it’s all just too hard.

Time to put your big‑boy pants on, grow up, and behave responsibly.

They can offer excuses, minimise the harm, or pretend the problems are too complex to solve, but the pattern is the same. The behaviour is childlike; the consequences are not. Knowing better and doing nothing is no longer defensible.

Brought to you by MOV ITx: we move it, prove it, then multiply it.

These are the voyages of Random Circuits, boldly entering the arena of ideas that disrupt, challenge, and transform.

Created by me, with assistance from Copilot.